Since the December 2020 core update, I’ve been obsessed with finding the source of the major changes in the SERPs.

Eight months ago, many of us were hit by the May core update. Most of us thought that Google would simply roll it back and that our sites would recover on their own.

Unfortunately, that never happened! When I saw that my sites still hadn’t recovered after the December algorithm update, I realized it was time to evolve my SEO approach.

Creating SEO-optimized content and building links worked for years… But we are entering a new era where that is simply not enough anymore!

So, since the December algorithm update, I’ve been working 12 hours a day nonstop. I have done a lot of reverse engineering and read a lot of posts on Facebook, Reddit, Twitter, forums, etc., trying to identify the winners and the losers of this update.

So here are my early thoughts about this December broad core update and what you need to do to recover your sites from the 2020 broad core updates.

Fix Your Content

There is absolutely no doubt… Google wants to show its users accurate and up-to-date information. If your site is full of outdated articles, it will be heavily devalued by Google. More than ever, freshness is an important ranking factor in the Google algorithm.

Google has also mentioned many times that search intent and content depth play a big role in its algorithm. If you want to rank now, it’s essential that you apply the following:

1. Update Your Content

Update all your outdated articles. Add new content and link out to authority sites that cover the same subject as you (be sure to link to recent articles; this will indicate to Google that your content is really up-to-date).

Add a “Frequently Asked Questions” section and be sure to cover all the popular questions asked by Google users. You can find them in the “People Also Ask” section of Google when researching your main keyword.

Importantly, make sure that your published date matches the year targeted in your title. If your article is “The 5 Best SEO Tools Absolutely Worth It For 2020”, be sure that your published date is somewhere in 2020.

If you link out to articles published in June 2020, be sure to set your published date after that date.
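If you have a lot of articles, you can check both rules in bulk. Here is a minimal sketch, assuming you can export each article’s title, published date and the dates of the articles it links to; the `Article` record and the `date_problems` helper are hypothetical names, not part of any CMS.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass
class Article:
    # Hypothetical record: fill it from your own CMS export.
    title: str
    published: date
    outbound_link_dates: list[date]


def date_problems(article: Article) -> list[str]:
    """Return human-readable problems with an article's published date."""
    problems = []
    # Find the first four-digit year (e.g. "2020") targeted in the title.
    match = re.search(r"\b(?:19|20)\d{2}\b", article.title)
    if match and int(match.group(0)) != article.published.year:
        problems.append(
            f"title targets {match.group(0)} but published date is {article.published.year}"
        )
    # The published date should not be earlier than the newest article linked to.
    if article.outbound_link_dates:
        newest = max(article.outbound_link_dates)
        if article.published < newest:
            problems.append(f"published {article.published} is before linked article {newest}")
    return problems
```

For example, an article titled “The 5 Best SEO Tools Absolutely Worth It For 2020”, published in March 2020 but linking to a June 2020 article, gets flagged once, for the linked-article rule.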

2. Delete or Repurpose Thin Content

As mentioned above, Google heavily devalues sites that have thin content. Content depth is another important ranking factor, so instead of having multiple articles covering one topic, repurpose them into one big article. Prove to Google that your article is the best because it covers everything for the query.

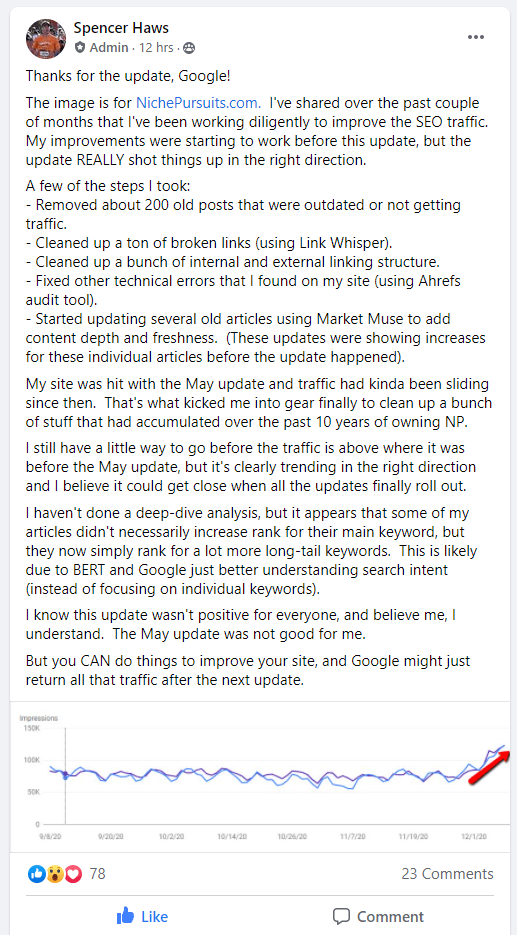

Spencer Haws from NichePursuits.com recently posted in his Facebook group that his site had been doing very well since the December core update. Updating and repurposing his existing articles played a big role in that growth.

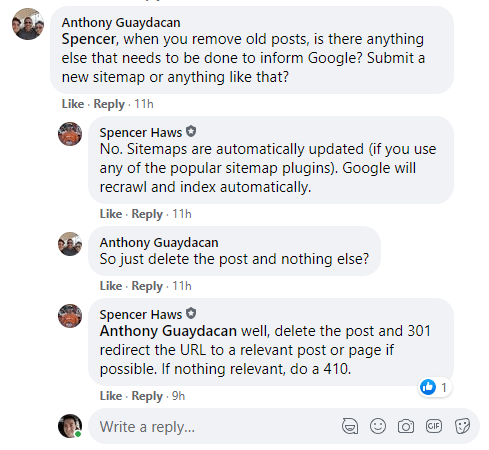

If you have content that cannot be repurposed, redirect it with a 301 redirect to the most relevant article. If you can’t find a relevant article, just delete it and return a 410 (Gone) status code. This is how Spencer Haws did it, and it’s also the best practice according to my research.
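To turn that decision into server rules, here is a minimal sketch, assuming an Apache server with mod_alias (where `Redirect 301` sends a permanent redirect and `Redirect gone` returns a 410). The `redirect_rules` helper and the example paths are hypothetical; on another server or a WordPress redirect plugin, the same 301/410 split applies with different syntax.

```python
from typing import Optional


def redirect_rules(retired: dict[str, Optional[str]]) -> list[str]:
    """Turn a retired-URL plan into Apache mod_alias directives.

    `retired` maps each old path to the most relevant replacement URL,
    or to None when no relevant article exists.
    """
    rules = []
    for old_path, target in sorted(retired.items()):
        if target:
            # A relevant article exists: permanent 301 redirect to it.
            rules.append(f"Redirect 301 {old_path} {target}")
        else:
            # Nothing relevant: tell crawlers the page is gone for good (410).
            rules.append(f"Redirect gone {old_path}")
    return rules


plan = {
    "/old-seo-tools/": "https://example.com/best-seo-tools/",
    "/thin-post/": None,
}
for rule in redirect_rules(plan):
    print(rule)
```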

3. Apply E-A-T to Your Content

E-A-T is carrying more weight in the Google algorithm now; we cannot deny it. E-A-T stands for Expertise, Authoritativeness, and Trustworthiness.

To increase your website’s E-A-T, you have several options:

3.1 Add the author’s name to your articles

If you have just one author on your website, link the author’s name to your About page, write a good 300-400 word bio about them, and link out to their Facebook, Twitter, and LinkedIn profiles, etc.

If you have multiple authors on your website, enable indexing of the author pages and be sure to link out to the social accounts of every author.

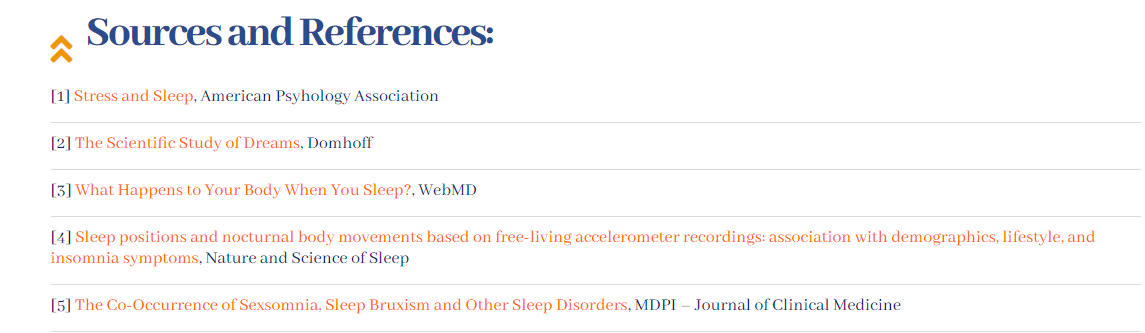

3.2 Add a resources section at the end of your article

Prove to Google that your article is well researched: add a list of your references and sources at the end of your article.

Fix Your Website Structure

The next important step is to optimize your website for crawlability and indexability and to fix all your structural issues.

1. Run a full audit on your website

Start by running a full scan of your website with tools like SEMrush, Ahrefs, Screaming Frog, or Sitebulb. I suggest using several tools to get a better overview of your website’s issues.

Start with SEMrush; once you have fixed all your on-page issues, do another full scan with Ahrefs, and so on.

Here are, in my opinion, the most important things to fix:

1.1 Avoid having orphan pages

Having orphan pages just shows Google that your site has content you don’t care about. You need to show that your entire site is valuable, including all of its content. Be sure to have at least one internal link pointing to each of your pages.
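Your audit tool will usually report orphan pages for you, but the logic is simple enough to check yourself. A minimal sketch, assuming you have the list of all your URLs (for example from your sitemap) plus an export of internal links as (source, target) pairs; `find_orphans` is a hypothetical helper.

```python
def find_orphans(
    pages: set[str], links: list[tuple[str, str]], homepage: str = "/"
) -> set[str]:
    """Return pages that no other page links to internally.

    `pages` is every URL you want indexed (e.g. from the sitemap);
    `links` holds (source, target) internal links from a crawl export.
    """
    # A page linking only to itself does not count as being linked to.
    linked_to = {target for source, target in links if source != target}
    # The homepage is the entry point, so it is never treated as an orphan.
    return pages - linked_to - {homepage}


pages = {"/", "/a/", "/b/", "/c/"}
links = [("/", "/a/"), ("/a/", "/b/"), ("/c/", "/c/")]
print(find_orphans(pages, links))  # /c/ has no inbound internal link
```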

1.2 Internal links pointing to broken pages

Have you deleted or repurposed some articles? Don’t forget to update your internal links to point to the new location, or simply remove them.
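If you keep a list of what you moved and what you deleted, the cleanup can be sketched like this. It assumes a hypothetical `moved` map from each old URL to its new URL (or to None if the page was deleted); actually editing the links in your CMS is still up to you.

```python
from typing import Optional


def fix_internal_links(
    links: list[tuple[str, str]], moved: dict[str, Optional[str]]
) -> list[tuple[str, str]]:
    """Repoint internal links to repurposed pages and drop links to deleted ones.

    `moved` maps an old URL to its new URL, or to None if the page was deleted.
    """
    fixed = []
    for source, target in links:
        if target not in moved:
            fixed.append((source, target))  # target untouched: keep the link
        elif moved[target] is not None:
            fixed.append((source, moved[target]))  # repoint to the new location
        # Otherwise the target was deleted, so the link is dropped.
    return fixed


links = [("/a/", "/old/"), ("/a/", "/gone/"), ("/b/", "/c/")]
moved = {"/old/": "/new/", "/gone/": None}
print(fix_internal_links(links, moved))
```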

1.3 Traffic to 404 error pages

Check which pages get traffic using analytics software like Google Analytics or Clicky, and make sure that no page receiving traffic ends up on a 404 error page. If it does, it hurts your UX: take note of those pages and redirect them to the proper location.

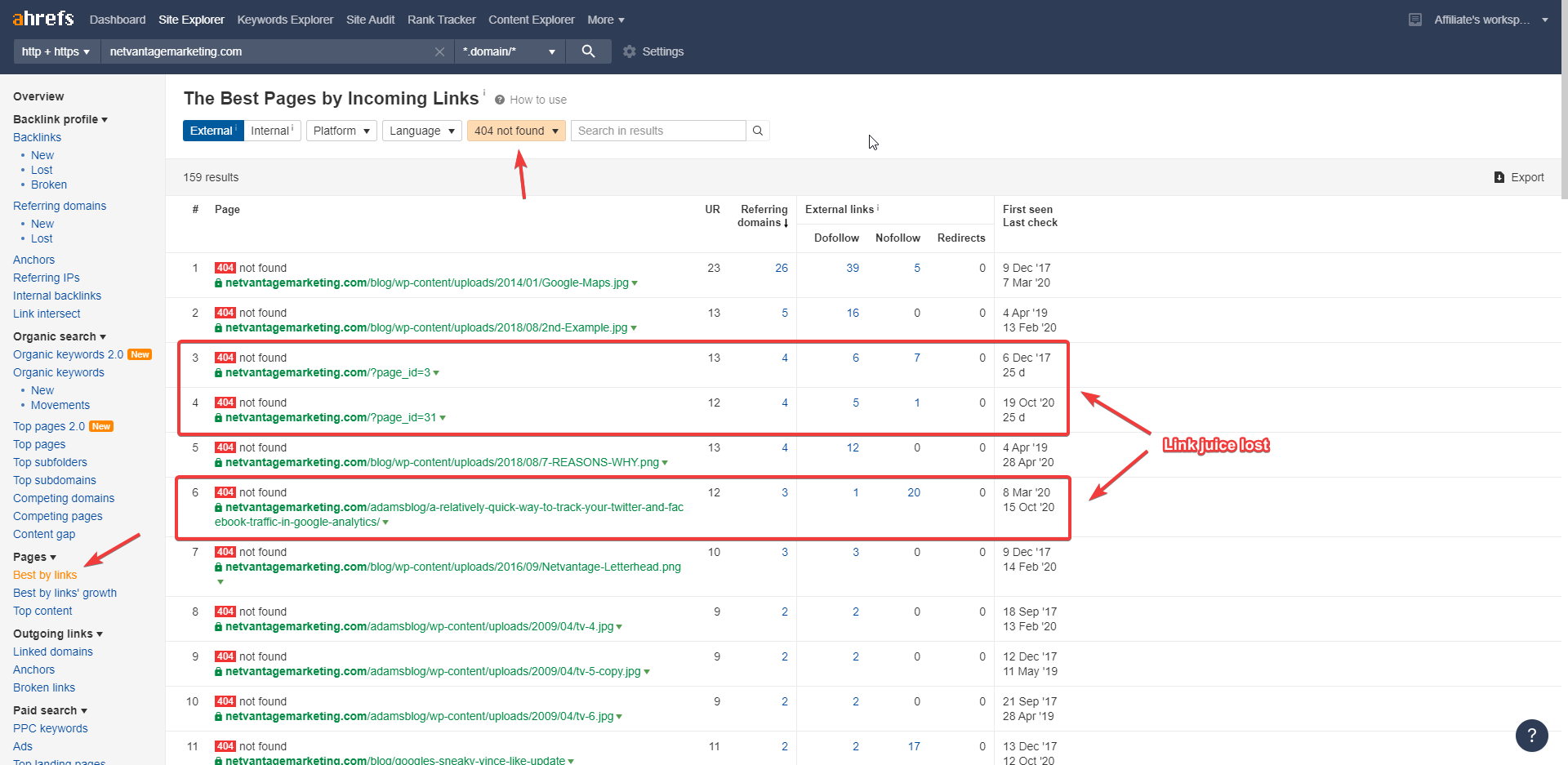
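Cross-checking the two exports can be done with a few lines. A minimal sketch, assuming you have exported pageviews per URL from your analytics tool and the HTTP status per URL from your crawler; `pages_to_redirect` and `min_views` are hypothetical names.

```python
def pages_to_redirect(
    pageviews: dict[str, int], status: dict[str, int], min_views: int = 1
) -> list[tuple[str, int]]:
    """List URLs that still receive traffic but return a 404, busiest first."""
    hits = [
        (url, views)
        for url, views in pageviews.items()
        if views >= min_views and status.get(url) == 404
    ]
    # Fix the URLs that lose the most visitors first.
    return sorted(hits, key=lambda item: item[1], reverse=True)


pageviews = {"/a/": 120, "/b/": 40, "/c/": 300}
status = {"/a/": 404, "/b/": 404, "/c/": 200}
print(pages_to_redirect(pageviews, status))
```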

1.4 Recover your link juice

A big mistake that a lot of people make is leaving pages that have backlinks returning a 404 error. These pages are easy to detect with Ahrefs and its “Best by links” report. Find the pages that have backlinks and redirect them to a relevant page. Don’t let all that link juice go to waste.
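Once you have the list of broken pages with backlinks, you still need to pick a relevant target for each one. Here is a rough sketch that suggests one by URL similarity, assuming you export the 404 URLs with their referring-domain counts and have a list of your live URLs. It uses Python’s `difflib`, which is only a crude proxy for relevance, so review every suggestion by hand; `suggest_redirects` is a hypothetical helper.

```python
import difflib
from typing import Optional


def suggest_redirects(
    broken: dict[str, int], live_urls: list[str]
) -> list[tuple[str, int, Optional[str]]]:
    """For each 404 URL with backlinks, suggest the live URL with the most similar path.

    `broken` maps a 404 URL to its number of referring domains.
    Returns (broken_url, referring_domains, suggestion_or_None), most-linked first.
    """
    suggestions = []
    for url, domains in sorted(broken.items(), key=lambda item: item[1], reverse=True):
        # String similarity between paths: a starting point, not a relevance judgment.
        match = difflib.get_close_matches(url, live_urls, n=1, cutoff=0.6)
        suggestions.append((url, domains, match[0] if match else None))
    return suggestions


broken = {"/best-seo-tool-2019/": 12, "/random/": 3}
live = ["/best-seo-tools/", "/contact/"]
print(suggest_redirects(broken, live))
```

A `None` suggestion means no live page looks close enough, so that one needs a manual decision.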

2. Optimize for crawlability

The crawl budget Google allocates is something that caught my attention a lot this year. If you want Google to give your site some love, you need to make it easy for Google to crawl and index your website.

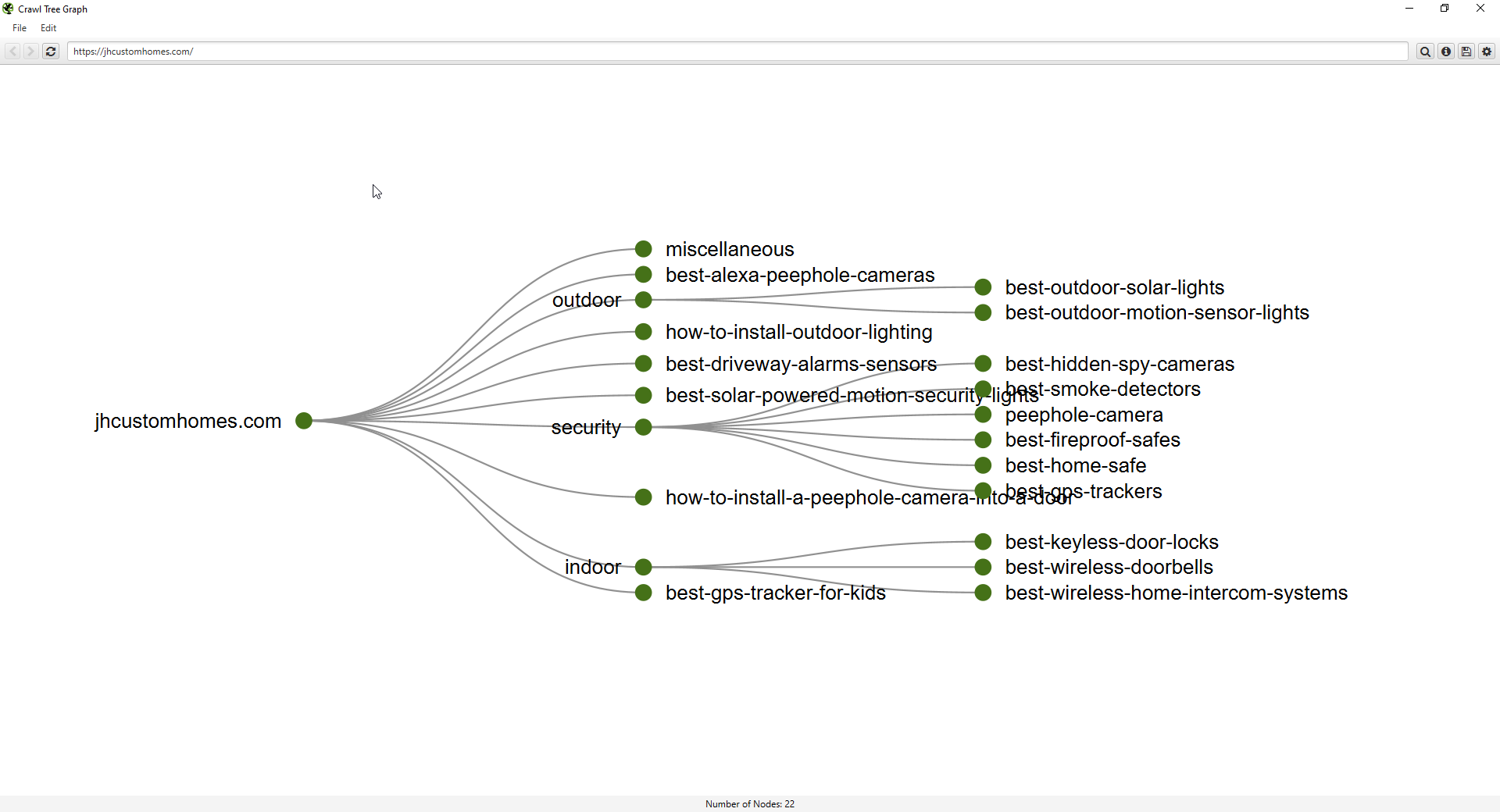

The best tool I have found to visualize your crawlability map is Screaming Frog. I suggest you run a full scan of your website and, once it is completed, launch the Crawl Tree Graph.

I have found a great small site that uses, IMHO, the best structure for crawlability and indexability: JHCustomHomes.com. As you can see, the site’s menu links to the site categories (silos). This way, link juice flows easily through all the articles on the site, and crawling is really easy for Google.
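What the crawl tree shows is essentially click depth: the minimum number of clicks from the homepage to each page. Screaming Frog calculates this for you; the sketch below only illustrates the idea with a breadth-first search over a hypothetical link map of a small silo site (homepage menu → categories → posts).

```python
from collections import deque


def crawl_depths(links: dict[str, list[str]], homepage: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage.

    The depth of a page is the minimum number of clicks needed to reach it.
    Pages that cannot be reached at all are missing from the result.
    """
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


site = {
    "/": ["/cat-a/", "/cat-b/"],           # menu links to the silos
    "/cat-a/": ["/post-1/", "/post-2/"],
    "/cat-b/": ["/post-3/"],
    "/post-3/": ["/post-4/"],
}
print(crawl_depths(site))
```

The deeper a page sits, the more clicks a crawler needs to reach it; linking the silos from the menu keeps every post within a couple of levels.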

I have seen a lot of people recommend noindexing category pages, but I disagree with this strategy. I recommend reading this article and forming your own opinion.

3. Optimize for indexability

Now that you have made it easy for Google to crawl your site, it’s time to make it easy for Google to find your new content.

For this, I use a simple trick: I add a “Latest posts” section to my homepage. The homepage is the page Google crawls the most, because it’s at the first level of depth.

If your website has too many pages and exceeds your crawl budget, it can take several days, or even weeks, before Google finds your new content and decides to index it.

In my opinion, having your latest articles appear on the homepage makes indexing 3x-5x faster.
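Most themes have a “recent posts” widget for this, but the logic is just “newest N posts, linked from the homepage”. A minimal sketch, assuming a hypothetical map of post URLs to published dates and a fixed menu; every URL it returns sits one click from the homepage.

```python
from datetime import date


def homepage_links(menu: list[str], posts: dict[str, date], n: int = 5) -> list[str]:
    """Links for the homepage: the menu, then the n most recent posts (newest first)."""
    newest = sorted(posts, key=posts.get, reverse=True)[:n]
    # Skip posts the menu already links to, so each URL appears once.
    return menu + [url for url in newest if url not in menu]


menu = ["/cat-a/", "/cat-b/"]
posts = {
    "/a/": date(2020, 12, 1),
    "/b/": date(2021, 1, 5),
    "/c/": date(2020, 6, 1),
}
print(homepage_links(menu, posts, n=2))
```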


Link Building Done Right!

OKAY! Let’s talk.

As most of you know… link building is my favorite thing! So yes, believe me, I take it really seriously!

The good thing is that I have a great circle of friends and we talk a lot… So it’s great to know what worked for others and what didn’t!

The good news is that backlinks still work and are still one of the biggest ranking factors out there! HOWEVER, I have to admit that the game has changed…

It looks like we are in an era of power, where only authority seems to matter. Google gives more importance to domain authority than ever. Websites that had a lot of backlinks from low-authority sites got hit badly by this algorithm update, while those with a strong backlink profile got a nice boost!

The bad news is that we will unfortunately have to rely more on the DR metric and therefore spend more on our backlinks. The reason is that backlink pricing is mostly based on the Ahrefs DR metric: webmasters charge more the higher their domain’s DR.

At least Ahrefs has recently updated its DR calculation, so it’s a bit more accurate now and harder to manipulate.

Guys like Charles Floate also think that low-authority backlinks have been heavily devalued by the recent algorithm update. He recently published a video about this.

I have to agree with him: according to what I have seen during my reverse-engineering sessions, websites with a higher ratio of high-DR backlinks now rank better.
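If you want to compare that ratio between your site and the sites outranking you, a minimal sketch follows, assuming you have exported the DR of each referring domain (for example from Ahrefs). The cutoff of DR 50 for a “high-DR” link is my own illustrative assumption, not an Ahrefs or Google threshold; adjust it to your niche.

```python
def high_dr_ratio(referring_dr: list[int], threshold: int = 50) -> float:
    """Share of referring domains whose DR is at or above `threshold`.

    The default threshold of 50 is an arbitrary illustrative cutoff.
    Returns 0.0 when there are no referring domains.
    """
    if not referring_dr:
        return 0.0
    strong = sum(1 for dr in referring_dr if dr >= threshold)
    return strong / len(referring_dr)


my_site = [10, 20, 55, 70]
competitor = [60, 72, 81, 15, 90]
print(high_dr_ratio(my_site), high_dr_ratio(competitor))
```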

What happened to the TA (Traffic Authority) metric?

I still believe that the Traffic Authority system is an excellent way to gauge the authority of a website. However, it will need to be adjusted to remain effective.

I have made big changes to my guest post packages: from now on, it won’t be possible to order backlinks based on the TA system alone. The good news is that you will still benefit from the Traffic Authority system, but combined with the DR metric. This will make the packages even better, as you will get the best of both worlds.

To see the new packages and pricing, just click here: https://igpublishing.spp.io/order/E215ML

Anchor text selection

Google has improved its algorithm a lot. Google has confirmed that when a long phrase is used as the anchor, it can better understand the link and give it more importance and relevancy. That’s why I highly suggest using long phrases from now on.

For example, if your target URL is https://seospider.com/best-rank-tracker-software/

Rather than targeting an exact match, use long-phrase anchors like:

- I’ve bought a rank tracker tool from this list

- where I have found my tool to track my keywords

- those are recommended by SEOSpider.com

- Grabbed one from this list and I don’t regret it

- etc.

And of course, to keep your anchor distribution natural, use generic anchors like “see here”, “click here”, etc.

A lot of people stopped using exact-match keywords years ago, myself included, but from now on, I will put more effort into the “natural side” of anchor text selection.
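To see where your current anchor profile stands, you can bucket your anchors. A minimal sketch, assuming you have exported the anchor texts pointing to one target URL; the bucket names, the generic-anchor set, and the “5 words or more” definition of a long phrase are all my own illustrative assumptions, not Google rules.

```python
GENERIC_ANCHORS = frozenset({"click here", "see here", "here", "this site"})


def anchor_distribution(
    anchors: list[str], keyword: str, generic: frozenset = GENERIC_ANCHORS
) -> dict[str, float]:
    """Share of anchors that are exact match, generic, long phrase, or other.

    A "long phrase" is arbitrarily defined here as 5 words or more.
    """
    counts = {"exact": 0, "generic": 0, "long_phrase": 0, "other": 0}
    for anchor in anchors:
        text = anchor.strip().lower()
        if text == keyword.lower():
            counts["exact"] += 1
        elif text in generic:
            counts["generic"] += 1
        elif len(text.split()) >= 5:
            counts["long_phrase"] += 1
        else:
            counts["other"] += 1
    total = len(anchors) or 1  # avoid dividing by zero on an empty profile
    return {kind: n / total for kind, n in counts.items()}


anchors = [
    "best rank tracker software",
    "click here",
    "I've bought a rank tracker tool from this list",
    "see here",
    "rank trackers",
]
print(anchor_distribution(anchors, "best rank tracker software"))
```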

Competitors reverse engineering

Do not hesitate to look at what your top competitors are doing; it’s always the key here… If the top 10 competitors average 5 links, don’t build 20 links.

Create a link-building plan for each target page and take the time to analyze your competitors. Look at their anchor texts, the number of internal links they have, etc. Don’t replicate them, just do better!

If your competitors have 5 links, try to build 1 or 2 more, and try to get stronger links.
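The rule of thumb above fits in a few lines. A minimal sketch, assuming you have the link counts of the ranking pages ordered by SERP position; rounding the average up and adding a margin of 2 (the upper end of “1 or 2 more”) are my own choices, and `link_target` is a hypothetical helper.

```python
import math
from statistics import mean


def link_target(competitor_links: list[int], extra: int = 2) -> int:
    """Suggested link count: top-10 average, rounded up, plus a small margin.

    `competitor_links` must be ordered by SERP position, best first.
    """
    top10 = competitor_links[:10]
    if not top10:
        raise ValueError("need at least one competitor")
    return math.ceil(mean(top10)) + extra


serp = [4, 5, 6, 5, 5, 4, 6, 5, 5, 5]
print(link_target(serp))            # average of 5, plus 2
print(link_target(serp, extra=1))   # average of 5, plus 1
```

Remember that the count is only half of it: the stronger the links, the better.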

Conclusion

We all agree that it’s still too early to draw a final conclusion on this algorithm update. However, on my side, rankings have been stable for enough days to let me conclude which direction Google is taking.

I really believe that E-A-T is a big part of the cake. We have to show Google that we are a legit resource and that we can be trusted.

I am confident enough to say that if you have been hit by this recent algorithm update and you work on all the points listed above, you should recover.

A link audit could be a good idea too; if you need one, the only man you need is Rick Lomas from Indexicon.com.

Good luck, everybody, I wish the best for everyone!